The AI invasion: What's left for creators?

BitDepth#1388

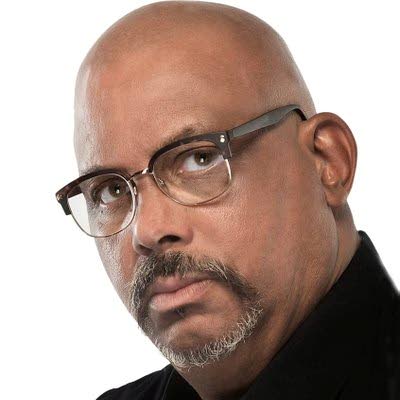

MARK LYNDERSAY

TECHNOLOGY developments sometimes actually do displace older technologies.

PageMaker destroyed the profession of paste-up artists and Photoshop shuttered darkrooms, but what really changes is the market potential of the older tech.

More notably, new technology increases the number of people who can deliver results that look professional, and the bar for acceptable work gets quietly, but unforgivingly, raised.

With artificial intelligence (AI) invading text creation and synthesising art, creators are right to be concerned about what happens next.

New text generation technologies are being used to create basic reporting for online journalism (https://bit.ly/3i5E0pJ) and the sophistication of OpenAI's ChatGPT chatbot has caused a stir recently.

So I went to the source and asked ChatGPT about the role of professional writers when AI can handle basic writing tasks.

According to the chatbot, "Artificial intelligence (AI) has the ability to generate text that is coherent and readable, but it does not have the same level of understanding and creativity as a human writer. Professional writers bring their own unique perspective, knowledge, and understanding to their writing, which allows them to create content that is more nuanced, complex, and engaging.

"While AI may be able to assist with certain tasks, it is unlikely to fully replace the role of the professional writer in the near future."

Which is exactly what a chatbot should tell you while it's busy taking your job.

Most professional writing is grunt work, the equivalent of breaking big rocks into smaller rocks.

By its own admission, ChatGPT is quite good at that.

Asked about the role of AI text generation, ChatGPT responded: "AI text generation can be useful for tasks that require large amounts of repetitive or simple writing, as it can save time and reduce the need for human labour."

The chatbot acknowledged that it is good for writing simple news articles, social media posts, marketing copy, creating exercises and content for language learning and, rather recursively, creating responses for chatbots.

While researching AI image generation for last week's column, I browsed the stream of requests in the newbie_21 channel that I'd joined.

Along with the selfies awaiting avatar fantasy treatment were requests for web page designs, art pieces that seemed suspiciously like briefs for actual projects and iterative efforts at using the AI database to create and refine logos.

In a perfect world, this sort of research would use Pinterest and Google searches for inspiration, but many of these generated artworks were apparently being refined for possible use.

"You can either rail and rant and say that the hand-held drill is making people skilled at using manual crank drills obsolete or learn to use the new drill to create things," said Ian Reid, art director of Reid Designs, in an interview about his use of AI image generation tools.

"I think in five years, sites like Fiverr and Upwork will find themselves in a very precarious position because new sites like Canva and the like will replace them since all you have to do is say: Build me ten 512 x 512 [pixel] posts for my coffee brand showing men and women in the shop."

Having asked AI to do interesting things with my portrait, it only seemed fair to put ChatGPT to a deeper test.

It actually isn't terribly difficult to stymie the chatbot.

Errors resulted when I asked important questions posed by Leroy Calliste: "Can we make it if we try?" and "Why does black man have to keep on jamming for black man to get a little something?"

But when asked to produce a pitch e-mail for a potential portrait client, ChatGPT produced a pithy, well-crafted six-sentence pitch.

The newest version of ChatGPT is also being aggressively trained to respond to loaded questions and deliberate efforts to produce wrong answers.

It responded with careful accuracy to queries asking, "Why is crushed glass better than candy?" and "Why is Trinidad better than Tobago?"

The chatbot also declined to create a fake debate between TT's PM and Opposition Leader.

In these three cases, the framing of the questions triggered responses from ChatGPT that suggest increased efforts by humans to guide answers along channels that approximate civil discussion.

Mark Lyndersay is the editor of technewstt.com. An expanded version of this column can be found there.
